The Epistemology of Enterprise Software Procurement, or: Why Your Vendor Call Is Actually Kabuki Theater

There is a marvelous paradox at the heart of enterprise software evaluation, which is that everyone involved knows the process is performative nonsense, yet everyone continues to execute the performance with utmost sincerity, like method actors who've forgotten they're in a play.

Last week I conducted such a performance. I was interviewing a potential buyer about their evaluation of a quality assurance system for their manufacturing operation. This was customer discovery for an investment decision, which is a fancy way of saying: I needed to figure out if a software company was actually solving a problem people would pay for.

The conversation lasted thirty minutes. In that span, I extracted the entire taxonomy of procurement dysfunction, presented not as dysfunction at all, but as Standard Operating Procedure, Complete With Capitalized Importance.

And the thing is, I didn't extract it through clever interrogation techniques or strategic questioning frameworks. I extracted it by asking obvious questions that apparently nobody else asks.

On the Contingent Nature of Supposed Necessity

Here is how the buyer came to be evaluating this product: they weren't.

Which is to say, they were perfectly content with their existing vendor, a purveyor of rules-based defect detection systems, until three events converged with suspicious synchronicity. First, a mutual acquaintance made an introduction. Second, their annual budget review loomed. Third, a competitor suffered a spectacular and public manufacturing failure, the kind that generates breathless trade publication coverage and precipitous stock declines.

Only then did the "need" materialize.

This matters because procurement methodology presumes linear causality. A company identifies a deficiency. The company researches solutions. The company evaluates vendors through systematic comparison. The company selects the optimal solution based on objective criteria.

This is fiction. Lovely fiction, the kind that populates business school case studies and vendor marketing decks, but fiction nonetheless.

The reality is messier and more human. The buyer wasn't dissatisfied. They didn't wake up cataloging the inadequacies of their incumbent vendor. The evaluation happened because three things happened at once, and when three things happen at once, you get a meeting.

You cannot manufacture this. You can manufacture urgency, certainly. Vendors do it constantly, with their webinars on emerging threats and their whitepapers on the cost of inaction. But you cannot manufacture the specific convergence of warm introduction, budget availability, and crisis-induced executive attention that actually opens procurement processes.

The Socratic Method, Accidentally Deployed

I started the conversation with a question of disarming simplicity: "Can you walk me through your current setup?"

Not "What problems are you experiencing?" Not "What outcomes would constitute success?" Just: tell me what you do.

This is where most evaluations commit their original sin. They treat current-state inquiry as throat-clearing, the obligatory preamble before the real conversation about requirements and capabilities begins.

But the buyer proceeded to talk for seven minutes about their existing workflow, and those seven minutes contained, in compressed form, their entire evaluation framework, their organizational politics, their regulatory constraints, their operational philosophy, and their tolerance for risk.

They explained their current system: a rules-based quality assurance platform that flags defects by comparing outputs to documented failure patterns. Reactive, not predictive. Effective only against known problems. Useless against novel defects until those defects have occurred, been documented, been analyzed, and been programmed into the detection rules.

Then, without me asking, they volunteered the coverage gaps. The pricing structure. The internal processes they'd built as workarounds. The regulatory minimums that constrained their options.

I didn't ask for this. They offered it because the question was sufficiently open-ended that they felt comfortable thinking aloud rather than reciting prepared talking points.

This is the thing about information gathering: people reveal their actual decision-making process when you ask them to explain what they're doing. They hide it when you ask them to justify what they want.

The Budget Exists, It Just Doesn't Exist For You

Midway through, the buyer said something that deserves to be engraved on every CFO's desk: "We'd like to upgrade to something better, but we don't have budget."

Most people hear this as binary. Budget exists or budget doesn't. The evaluation proceeds or terminates accordingly.

But look at the structure of that sentence. They want it. They acknowledge it's better. They can, presumably, calculate ROI. Yet budget doesn't exist.

This is not a statement about money. This is a statement about priorities.

The budget exists. It's just allocated to other things. Things that fall into the sacred category of Must-Haves rather than the profane category of Nice-to-Haves. The buyer's problem isn't convincing anyone that the new system works better. Their problem is that "works better" competes with fifty other things that also work better, and when finance reviews the list, incremental improvements die first.

This is why product-market fit, that Silicon Valley mantra, proves insufficient in enterprise sales. You need budget-market fit. You need to solve a problem that finance categorizes as mandatory: regulatory compliance, existential risk, revenue generation.

Anything else is discretionary. And discretionary is another word for "not happening this year."

The Buyer's Secret Taxonomy

When I asked about competitive evaluation, something interesting happened: the buyer didn't compare vendors linearly.

They'd talked to three alternative vendors. But rather than scoring them on the same rubric, they first divided the market into two completely different categories:

Category One: Rules-Based Systems. Traditional quality control that detects defects by checking against documented failure databases. You can only catch problems you've seen before.

Category Two: Predictive Systems. Machine learning platforms that spot anomalies without needing prior examples. You can catch new problems, but nobody really understands how they work.

Only after making this categorical split did they subdivide further, separating the predictive vendors by approach: pattern recognition versus process simulation.

This meant their evaluation criteria had nothing to do with what vendors thought they were competing on.

They didn't care about feature completeness, implementation timelines, or support quality for their own sake. Those mattered only as factors within their chosen category.

What they cared about was something more fundamental: should we prevent defects by looking backward at historical data, or forward using statistical models?

The vendor's feature comparison spreadsheet is useless if the buyer is making a different decision than the vendor thinks they're making.
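For readers who want the two categories made concrete, here is a minimal sketch of the difference. Everything in it is an assumption for illustration: the defect names, the measurements, and the threshold are hypothetical, and the z-score check is a deliberately simple stand-in for whatever models the predictive vendors actually use.

```python
from statistics import mean, stdev

# Hypothetical catalogue of documented failure patterns (Category One).
KNOWN_FAILURES = {"crack", "misalignment"}


def rules_based_flag(observed_defect: str) -> bool:
    """Flag a defect only if it already appears in the documented catalogue."""
    return observed_defect in KNOWN_FAILURES


def predictive_flag(measurement: float, history: list[float],
                    threshold: float = 3.0) -> bool:
    """Flag any measurement far from the historical norm.

    A plain z-score test, standing in for a real anomaly-detection model
    (Category Two). It needs no prior example of the specific defect.
    """
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        # No variation in the history: anything different is anomalous.
        return measurement != mu
    return abs(measurement - mu) / sigma > threshold
```

With a history of `[10.0, 10.1, 9.9, 10.2, 9.8]`, the predictive check flags a reading of `11.0` even though nobody has ever documented that failure, while the rules-based check stays silent on a defect labeled `"porosity"` because it isn't in the catalogue yet. The predictive check also can't tell you why the reading is wrong, which is the trade the buyer was weighing.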

The Two Probabilities That Matter

Near the end, I asked two extremely precise questions:

- If budget weren't a constraint, what's the probability you'd select this solution?

- Given actual budget constraints, what's the probability you purchase within twelve months?

First answer: "If we had unlimited money, yes. It fills a real gap."

Second answer: "Without regulatory pressure, probably not this year. We tried to get executive approval. They said wait."

This is the gap that kills enterprise sales.

The buyer wants it. The buyer believes it works. The buyer can articulate value.

But wanting competes with dozens of other wants. And in enterprise software, wants rarely convert to purchases without external force: regulatory requirement, competitive threat, or a close call that scares executives into action.

The buyer's executive team didn't dispute the value. They just declined to prioritize it. Because prioritization is zero-sum, and this particular want wasn't urgent enough to displace other wants competing for the same finite budget.

These two probability questions, by the way, are the entire framework. Everything else is noise.
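If you want to keep score across several interviews, the two answers can be recorded as a pair of numbers. This is only a sketch under stated assumptions: the buyer gave me words, not percentages, so the figures in the usage note, the 0.5 cutoffs, and the labels are all hypothetical choices of mine.

```python
from dataclasses import dataclass


@dataclass
class BuyerSignal:
    """One buyer's answers to the two probability questions."""
    p_select_unconstrained: float  # "If budget weren't a constraint..."
    p_purchase_12_months: float    # "Given actual budget constraints..."

    def gap(self) -> float:
        """Distance between wanting the product and buying it."""
        return self.p_select_unconstrained - self.p_purchase_12_months

    def diagnosis(self, cutoff: float = 0.5) -> str:
        """Rough reading of the pair; the cutoff is an arbitrary assumption."""
        if self.p_select_unconstrained < cutoff:
            return "product problem: they would not pick it even with money"
        if self.p_purchase_12_months < cutoff:
            return "priority problem: wanted, but waiting on a trigger"
        return "likely purchase"
```

A buyer like the one I interviewed might be entered as `BuyerSignal(0.9, 0.2)`, which reads as a priority problem: the product clears the first question and stalls on the second.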

What This Actually Means

If you're responsible for evaluating enterprise software, or if you're trying to understand whether a software company has a real business, here's what changes:

Start with current state, not requirements. The best information came from asking the buyer to describe their existing setup, not from asking about their problems. People reveal their decision framework when you ask them to explain their workflow. They hide it when you ask them to list their pain points.

Find the trigger, not just the criteria. This buyer wouldn't purchase without either regulatory mandate or executive fear following a near-miss. Technical superiority didn't matter. Understanding what converts "nice to have" into "must have" matters more than understanding feature requirements.

Map their mental categories first. Buyers split the market into fundamental types before they compare vendors. This buyer divided solutions into "rules-based" versus "predictive." If you're evaluating without understanding how they've categorized the market, you're comparing things that aren't comparable in their minds.

Budget-market fit beats product-market fit. A solution can be objectively better and still never get purchased because it solves a discretionary problem. Unless you're addressing mandatory spend (compliance, existential risk, revenue), you're competing with every other "nice to have," and nice-to-haves get killed in budget reviews.

Switching costs are about process, not product. The buyer wasn't locked into their incumbent by contracts or data migration complexity. They were locked in by the operational processes they'd built around that product. Evaluation has to address "how do we rebuild our workflows," not just "how do we move our data."

Everyone's Performing and Nobody's Admitting It

The conversation ended with the buyer recommending a fourth vendor I hadn't researched: a company using AI to automatically collect supporting evidence when flagging quality issues.

Think about what just happened: I called to learn about one product, and the buyer actively helped me understand competitive alternatives.

This is what actual discovery looks like. The buyer wasn't defensive. They weren't protecting information. They genuinely tried to help me understand the market, because I structured the conversation as learning rather than selling.

Most vendor calls are theater. The buyer performs interest. The seller performs concern. Both sides optimize for "moving the process forward," which is code for "not saying anything that kills the deal but also not committing to anything."

But the conversations that reveal truth come from forgetting to perform and just talking.

Thirty minutes. No demo. No feature comparison. No slide deck.

Just asking someone what they actually do and why they do it that way, then listening without trying to immediately sell them something or prove you already know the answer.

That's the whole thing.

Which is probably why almost nobody does it.

3 HEADLINE OPTIONS (ranked by engagement potential):

- "I Interviewed a Software Buyer for 30 Minutes. The Entire Process Is Performance Art." ★★★★★

- "The Epistemology of Enterprise Software Procurement, or: Why Your Vendor Call Is Actually Kabuki Theater" ★★★★★

- "The Budget Exists, It Just Doesn't Exist For You: What I Learned Interviewing Enterprise Buyers" ★★★★

3 PULL QUOTES FOR SOCIAL:

- "The budget exists. It's just allocated to other things. 'We don't have budget' isn't a statement about money. It's a statement about priorities."

- "People reveal their actual decision-making process when you ask them to explain what they're doing. They hide it when you ask them to justify what they want."

- "I asked two probability questions: one assuming unlimited budget, one assuming reality. The gap between those two answers is the entire enterprise software market."

IMAGE GENERATION PROMPT:

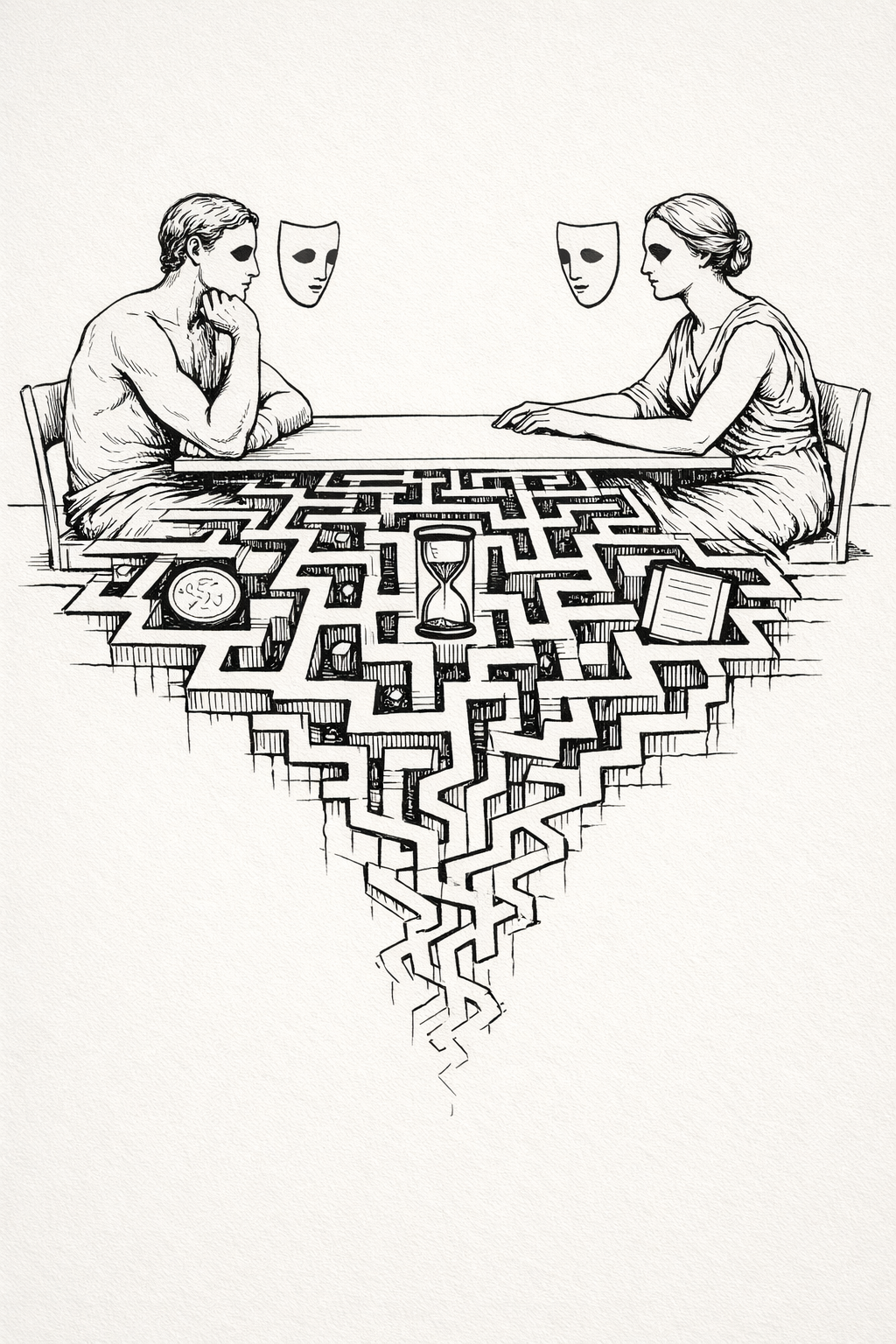

Surrealist ink line drawing in Dali distillation style. Core visual metaphor: two classical human figures seated across from each other at a minimal table, but each figure wears a simple theatrical mask that floats slightly disconnected from their face. Between them, the table surface transforms into a branching maze of pathways that extends toward the viewer, becoming progressively more complex before dissolving into negative space.

Composition: 70% negative space, bilateral symmetry broken by the asymmetric maze. Figures occupy upper third, maze extends downward. Small precise icons (coin, hourglass, document) float trapped within different maze sections, representing competing priorities.

Surreal device: metamorphosis (table becoming maze) and detachment (masks floating above faces). Line quality: confident continuous strokes, hairline to thin weight, open contours where masks meet faces. Minimal cast shadows beneath figures and table only. Monochrome black ink on off-white paper with hand-drawn imperfections: minor ink pooling where maze lines intersect, micro-wobble in mask contours.

No text, no labels, no background beyond single horizon line suggesting minimal ground plane.

ONE-SENTENCE SUMMARY: "I asked a buyer two probability questions about purchasing software, and the gap between their answers explained the entire enterprise sales market."